Learning Hierarchical Orthogonal Prototypes for Generalized Few-Shot 3D Point Cloud Segmentation

Abstract

Generalized few-shot 3D point cloud segmentation aims to adapt to novel classes from only a few annotations while maintaining strong performance on base classes, but this remains challenging due to the inherent stability–plasticity trade-off: adapting to novel classes can interfere with shared representations and cause base-class forgetting. We present HOP3D, a unified framework that learns hierarchical orthogonal prototypes with an entropy-based few-shot regularizer to enable robust novel-class adaptation without degrading base-class performance. HOP3D introduces hierarchical orthogonalization that decouples base and novel learning at both the gradient and representation levels, effectively mitigating base–novel interference. To further enhance adaptation under sparse supervision, we incorporate an entropy-based regularizer that leverages predictive uncertainty to refine prototype learning and promote balanced predictions. Extensive experiments on ScanNet200 and ScanNet++ demonstrate that HOP3D consistently outperforms state-of-the-art baselines under both 1-shot and 5-shot settings. The code will be publicly released upon acceptance.

Method

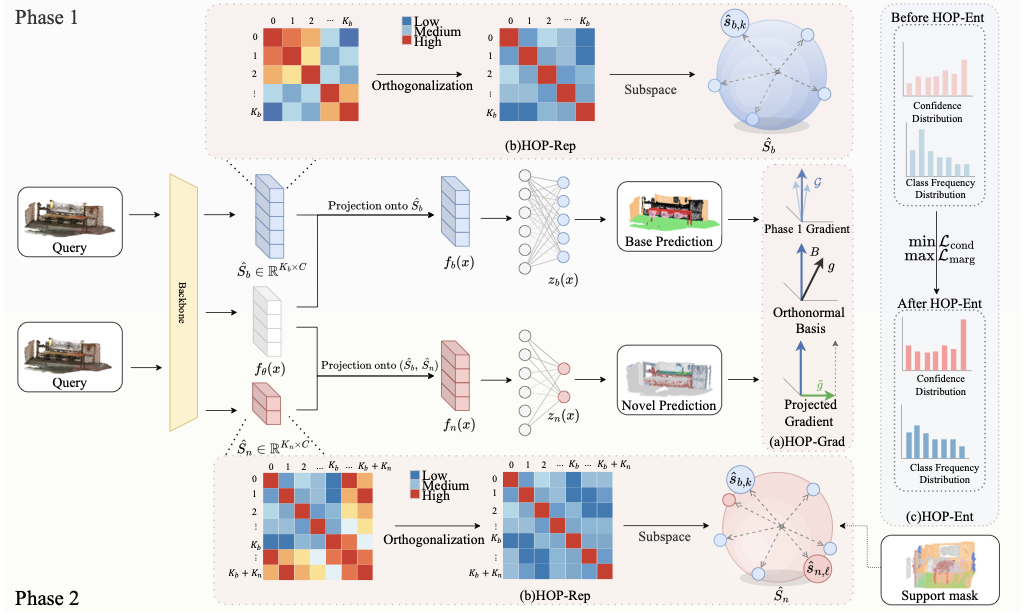

We propose HOP3D, a unified framework for generalized few-shot 3D point cloud segmentation that reduces base–novel interference and makes few-shot adaptation more robust. HOP3D integrates HOP-Net (hierarchical orthogonalization) and HOP-Ent (an entropy-based few-shot regularizer). HOP-Net applies orthogonalization at two levels, the gradient level (HOP-Grad) and the representation level (HOP-Rep):

- HOP-Grad: during Phase-2 adaptation, projects novel-class gradients onto the orthogonal complement of the base-task gradient directions to mitigate base forgetting.

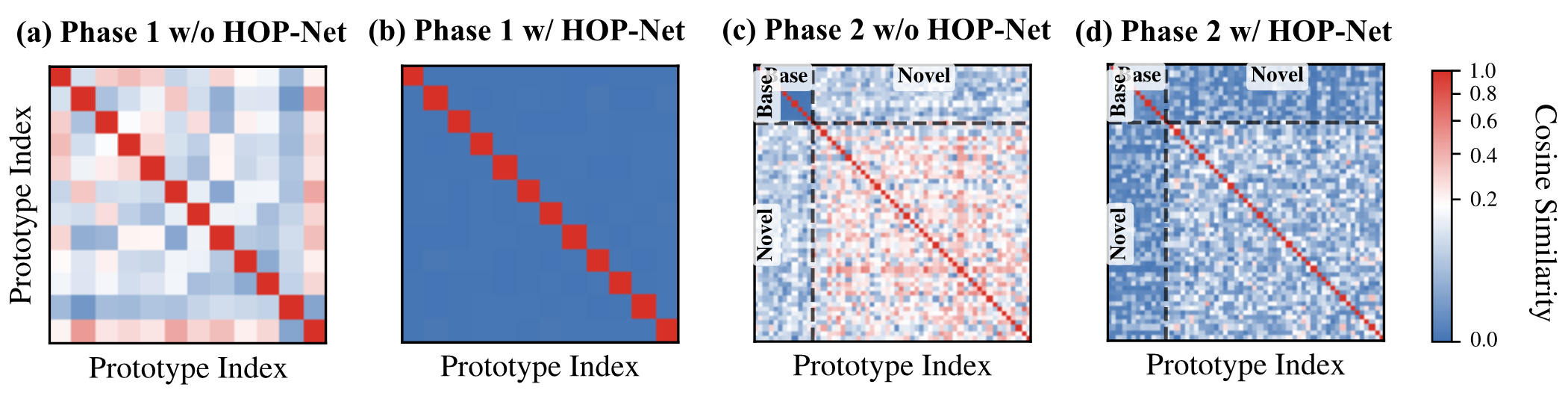
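The projection named in the HOP-Grad description can be illustrated with a minimal sketch. It assumes the base-task gradient directions are available as a small set of flattened vectors; how HOP3D actually collects or compresses those directions is not stated on this page, so `base_dirs`, `orthonormal_basis`, and `project_out` are hypothetical names used only for illustration.

```python
import math


def dot(u, v):
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def orthonormal_basis(vectors, eps=1e-10):
    """Gram-Schmidt: return an orthonormal basis for span(vectors)."""
    basis = []
    for v in vectors:
        w = list(v)
        for u in basis:
            c = dot(w, u)
            w = [wi - c * ui for wi, ui in zip(w, u)]
        norm = math.sqrt(dot(w, w))
        if norm > eps:  # skip directions that are already in the span
            basis.append([wi / norm for wi in w])
    return basis


def project_out(grad, base_dirs):
    """Project grad onto the orthogonal complement of span(base_dirs)."""
    g = list(grad)
    for u in orthonormal_basis(base_dirs):
        c = dot(g, u)
        g = [gi - c * ui for gi, ui in zip(g, u)]
    return g


# Toy example (assumed values): the base directions span the xy-plane,
# so only the z-component of the novel gradient should survive.
base_dirs = [[1.0, 0.0, 0.0], [1.0, 1.0, 0.0]]
novel_grad = [0.5, -2.0, 3.0]
projected = project_out(novel_grad, base_dirs)
print(projected)
```

After projection, the update has zero inner product with every base direction, so to first order a step along it does not move the parameters along those directions.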

- HOP-Rep: learns orthogonal prototype subspaces that decompose representations into base and novel components, giving a more stable decision geometry.

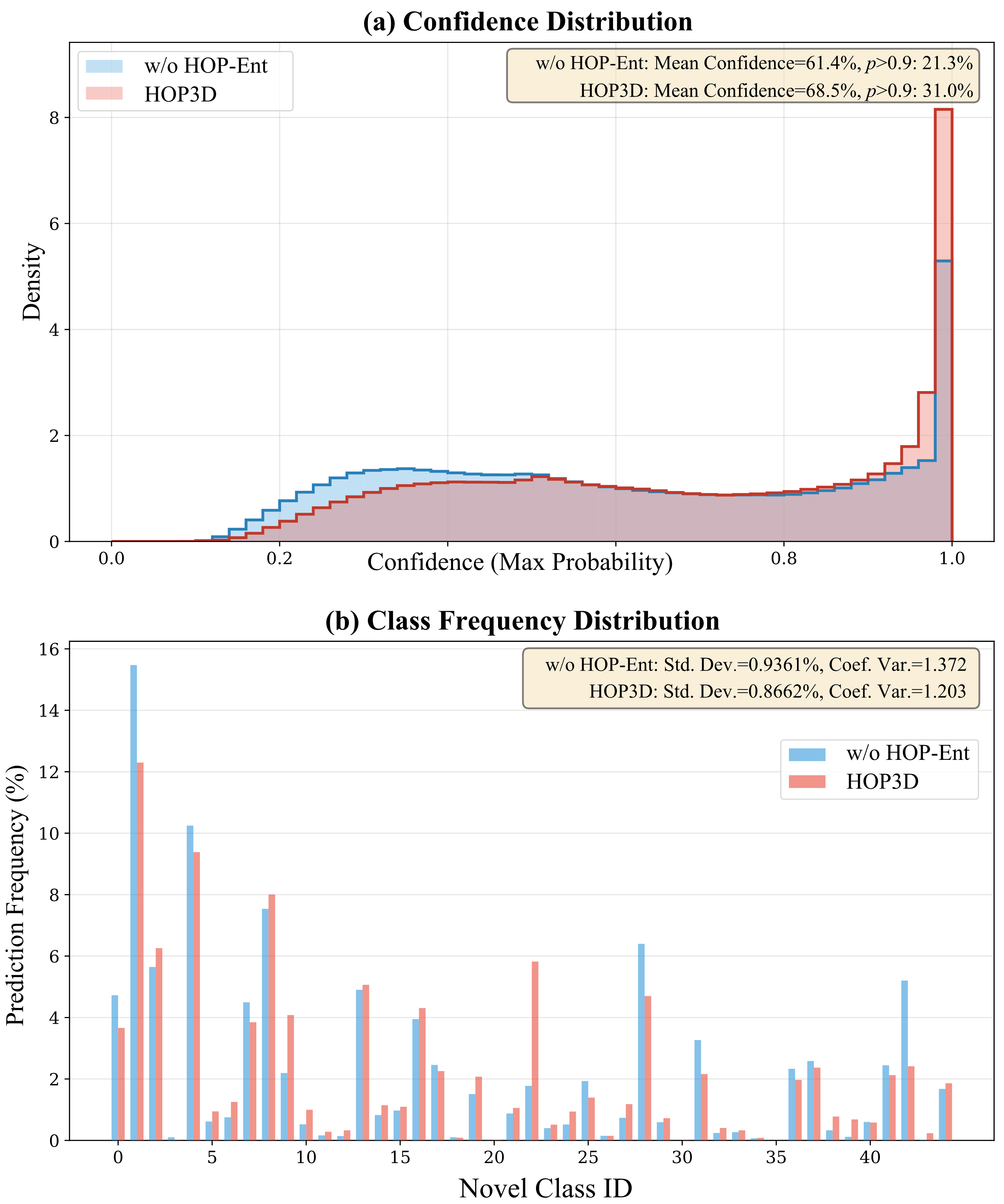
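One way to picture orthogonal base and novel prototype subspaces is a penalty that is zero exactly when every novel prototype is orthogonal to every base prototype. The sketch below uses the mean squared cosine similarity over all base/novel pairs. This particular penalty is an assumption chosen for illustration and is not claimed to be the loss used by HOP-Rep; `cross_orthogonality_penalty` is a hypothetical name.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cross_orthogonality_penalty(base_protos, novel_protos):
    """Mean squared cosine similarity over all base/novel prototype pairs.

    Illustrative assumption, not the published HOP-Rep objective.
    """
    total = sum(cosine(b, n) ** 2 for b in base_protos for n in novel_protos)
    return total / (len(base_protos) * len(novel_protos))


# Toy prototypes (assumed values) in a 3-D feature space.
base_protos = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]

orthogonal_novel = [[0.0, 0.0, 2.0]]   # lies outside the base subspace
overlapping_novel = [[1.0, 0.0, 1.0]]  # shares a direction with a base prototype

penalty_orthogonal = cross_orthogonality_penalty(base_protos, orthogonal_novel)
penalty_overlapping = cross_orthogonality_penalty(base_protos, overlapping_novel)
print(penalty_orthogonal, penalty_overlapping)
```

The penalty is zero for the orthogonal prototype and positive for the overlapping one, which is the behavior an orthogonality constraint between the two prototype sets would reward.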

- HOP-Ent: applies dual-entropy regularization to encourage confident and balanced novel predictions under sparse supervision.

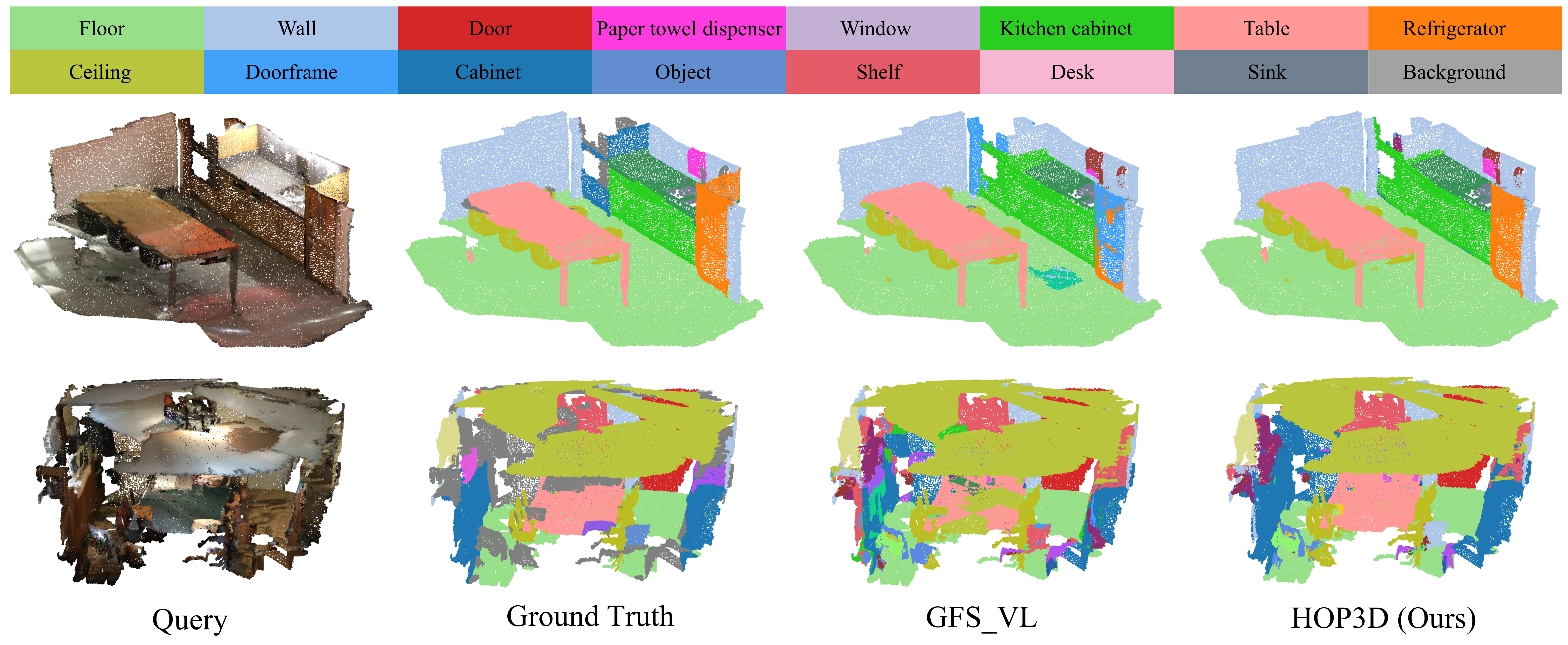
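The two goals named for HOP-Ent, confident and balanced predictions, are commonly expressed with two entropy terms: a low entropy for each point's prediction and a high entropy for the prediction averaged over points. The sketch below follows that common formulation. The exact terms and weights used by HOP-Ent are not given on this page, so `dual_entropy_loss` and `balance_weight` are hypothetical.

```python
import math


def entropy(p, eps=1e-12):
    """Shannon entropy (in nats) of a probability vector."""
    return -sum(pi * math.log(pi + eps) for pi in p)


def dual_entropy_loss(probs, balance_weight=1.0):
    """Mean per-point entropy minus the weighted entropy of the mean prediction.

    Minimizing the first term rewards confident predictions; the subtracted
    term rewards a class-balanced average prediction. Illustrative assumption,
    not the published HOP-Ent objective.
    """
    n = len(probs)
    num_classes = len(probs[0])
    per_point = sum(entropy(p) for p in probs) / n
    mean_pred = [sum(p[k] for p in probs) / n for k in range(num_classes)]
    return per_point - balance_weight * entropy(mean_pred)


# Toy softmax outputs (assumed values) for two points and two classes.
confident_balanced = [[1.0, 0.0], [0.0, 1.0]]
confident_collapsed = [[1.0, 0.0], [1.0, 0.0]]
uncertain = [[0.5, 0.5], [0.5, 0.5]]

loss_balanced = dual_entropy_loss(confident_balanced)
loss_collapsed = dual_entropy_loss(confident_collapsed)
loss_uncertain = dual_entropy_loss(uncertain)
print(loss_balanced, loss_collapsed, loss_uncertain)
```

Only the case that is both confident and balanced reaches the lowest loss; collapsing onto one class or staying uncertain everywhere both score worse.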

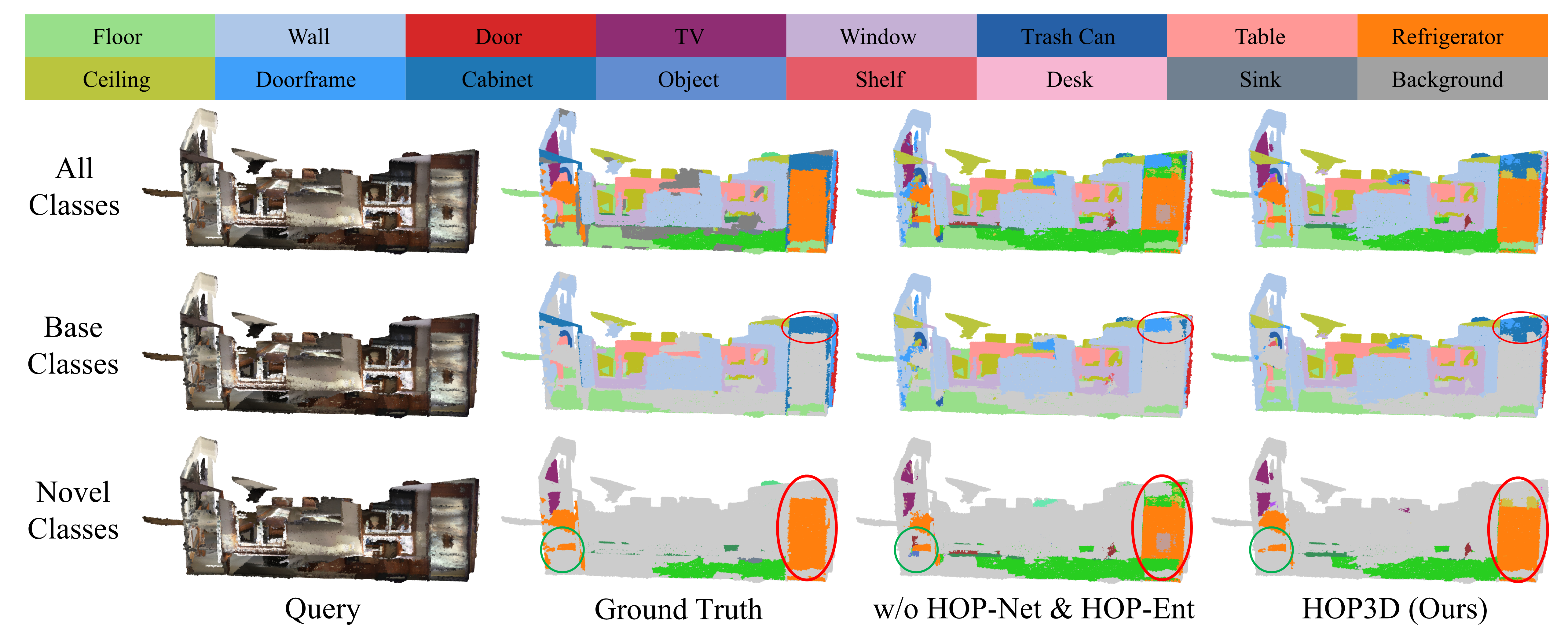

Qualitative Results

Experimental Results

Metrics: mean IoU (%) on base classes (B), novel classes (N), and all classes (A), plus the harmonic mean (HM) of B and N. Results are reported for 5-shot and 1-shot settings on ScanNet200 and ScanNet++.
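As a quick check on how HM relates to the other columns, the sketch below recomputes it from the B and N values of the HOP3D 5-shot row on ScanNet200. The function name `harmonic_mean` is illustrative. Because the reported B and N are already rounded, a recomputed HM may differ from a reported one in the last digit for some rows.

```python
def harmonic_mean(base_miou, novel_miou):
    """Harmonic mean of base and novel mIoU; 0.0 if both are zero."""
    total = base_miou + novel_miou
    return 2.0 * base_miou * novel_miou / total if total > 0 else 0.0


# HOP3D, ScanNet200, 5-shot: B = 67.36, N = 34.38 (values from the table).
hm = harmonic_mean(67.36, 34.38)
print(round(hm, 2))  # matches the reported HM of 45.52
```

The harmonic mean is dominated by the smaller of the two values, so a method cannot reach a high HM through base-class accuracy alone.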

ScanNet200

| Method | 5-shot B | 5-shot N | 5-shot A | 5-shot HM | 1-shot B | 1-shot N | 1-shot A | 1-shot HM |
|---|---|---|---|---|---|---|---|---|
| Fully Sup. | 68.70 | 39.32 | 45.51 | 50.02 | 68.70 | 39.32 | 45.51 | 50.02 |
| PIFS | 28.78 | 3.82 | 9.07 | 6.71 | 17.84 | 2.87 | 6.02 | 4.88 |
| attMPTI | 37.13 | 4.99 | 11.76 | 8.79 | 54.84 | 3.28 | 14.14 | 6.17 |
| COSeg | 57.67 | 5.21 | 16.25 | 9.54 | 47.03 | 4.03 | 13.09 | 7.42 |
| GW | 59.28 | 8.30 | 19.03 | 14.55 | 55.23 | 6.47 | 16.74 | 11.56 |
| GFS-VL | 67.17 | 31.18 | 38.76 | 42.59 | 67.25 | 28.89 | 36.97 | 40.42 |
| HOP3D (ours) | 67.36 | 34.38 | 41.32 | 45.52 | 68.45 | 31.80 | 39.52 | 43.42 |

ScanNet++

| Method | 5-shot B | 5-shot N | 5-shot A | 5-shot HM | 1-shot B | 1-shot N | 1-shot A | 1-shot HM |
|---|---|---|---|---|---|---|---|---|
| Fully Sup. | 65.45 | 37.24 | 48.53 | 47.47 | 65.45 | 37.24 | 48.53 | 47.47 |
| PIFS | 39.98 | 5.74 | 19.44 | 10.03 | 36.66 | 4.95 | 17.63 | 8.71 |
| attMPTI | 55.89 | 4.19 | 24.87 | 7.78 | 53.16 | 3.55 | 23.40 | 6.66 |
| COSeg | 59.34 | 6.96 | 27.91 | 12.45 | 58.49 | 6.24 | 27.14 | 11.26 |
| GW | 51.35 | 11.03 | 27.16 | 18.15 | 46.71 | 6.63 | 22.66 | 11.59 |
| GFS-VL | 60.49 | 21.40 | 37.04 | 31.61 | 60.02 | 17.90 | 34.75 | 27.56 |
| HOP3D (ours) | 62.40 | 23.70 | 39.18 | 34.34 | 61.72 | 19.23 | 36.23 | 29.32 |

Key Findings

- Consistent novel gains: HOP3D improves over the strongest baseline (GFS-VL) on both datasets under 1-shot and 5-shot, by 1.33 to 3.20 points in mIoU-N and 1.76 to 3.00 points in HM.

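- Base retention: HOP3D's base-class mIoU exceeds that of GFS-VL in all four settings and stays within 1.4 points of the fully supervised reference on ScanNet200 and within 3.8 points on ScanNet++, while novel recognition improves.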

- Entropy regularization helps: HOP-Ent improves confidence and class balance during Phase-2 adaptation.

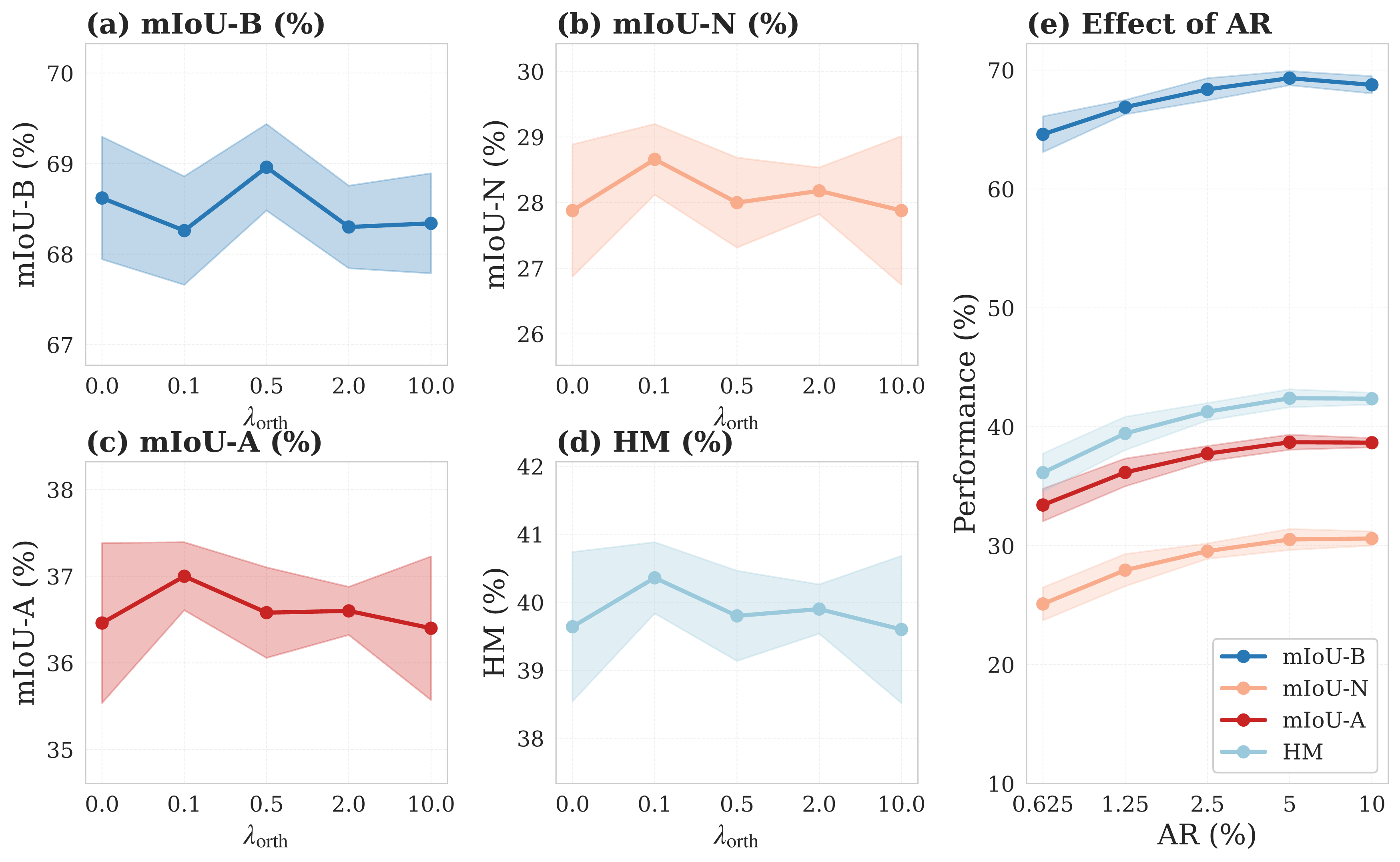

Ablation & Additional Analysis

BibTeX

@inproceedings{HOP3D2026,
  title={Learning Hierarchical Orthogonal Prototypes for Generalized Few-Shot 3D Point Cloud Segmentation},
  author={Zhao, Yifei and Zhao, Fanyu and Zhang, Zhongyuan and Wu, Shengtang and Lin, Yixuan and Li, Yinsheng},
  booktitle={IEEE International Conference on Multimedia \& Expo (ICME)},
  year={2026},
  url={https://arxiv.org/abs/2603.19788}
}